ORIGINALLY PUBLISHED 22nd October 2015

Hi,

As more and more of you start to use Nearline storage in your EasyTier pools, there are a couple of scenarios which I would like to describe to you to help you avoid some pitfalls.

Migrating from a single tier pool to a multi-tier pool containing Nearline.

So you have decided that EasyTier is the way to go for your business, and after doing some analysis you’ve decided that you want to migrate to a 3 tier pool. After all, you have a lot of idle data in your environment, so why store it on expensive Enterprise storage when you could keep it on cheaper Nearline? I focus on the 3 tier solution here, but the same applies to 2 tiers with Nearline storage.

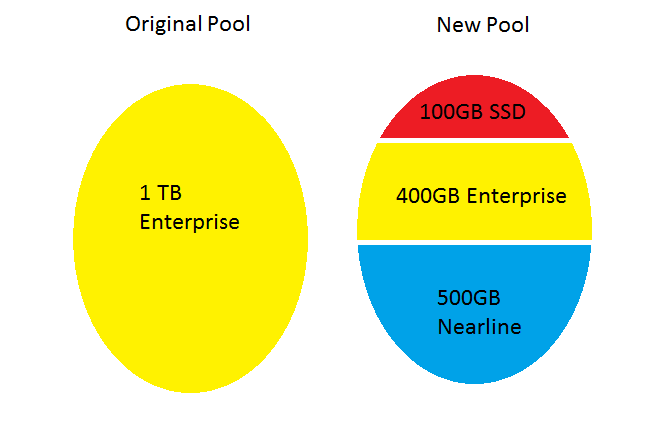

You decide to make this change as part of a storage refresh cycle, so rather than adding capacity to an existing pool, you create a brand new pool and start migrating the data from the old pool to the new pool.

If we look at the picture above, it’s clear that the 1TB of data isn’t all going to fit into the 500GB of high performance space (400GB Enterprise and 100GB SSD), so we need EasyTier to find the cold data and move it down to the Nearline space.

Unfortunately there are a couple of gotchas here:

- EasyTier gives priority to data movement which will increase performance. As a result, migrating data down to the Nearline tier is given the lowest possible priority.

- Data migrations using Volume Mirroring, which is typically the recommended approach for data movement in SVC/Storwize, forget all of the historical heat data for the extents, and EasyTier has to re-learn the heat of the data once it’s been moved into the new pool.

So what do these gotchas mean to you, and how can you avoid them?

Point 2 – Data Migration versus Data Mirroring

Point 2 is the easiest to explain, so I’ll start with it. If you use Data Migration using svctask migratevdisk, the EasyTier software will preserve the existing tier of the data (wherever possible) and will also remember the heat of that data when it is applied to the new pool. So in this case using data migration rather than mirroring *may* be slightly more efficient. However, migratevdisk cannot be slowed down or stopped if the additional workload generated by the data movement causes a performance problem. On the flip side, it doesn’t take EasyTier very long to re-learn, so there’s no harm in using the volume mirroring approach. It will just add a delay of a day or two until EasyTier gets a really good understanding of your data again.

Point 1 – Demotion is low priority

Point 1 is a little more subtle. Hopefully it makes sense that when EasyTier has more work to do than it can complete, it will prioritise the work which it believes will give the biggest performance boost. Demoting cold data down to Nearline will not provide any performance boost at all, so it gets the lowest priority. When you are in the middle of a large data movement exercise, there will be a lot of promotion to SSD and rebalancing between the Enterprise tier managed disks, both of which improve performance, so very few resources will be given to demoting data down to the Nearline tier.

However you are in the middle of a mass data migration – trying to move all 1 TB of your data into this new pool.

- The first 400GB will go just fine – straight into the Enterprise Tier

- However, you’ve now basically run out of space in the Enterprise tier, so the next 100GB you migrate is going to go straight into the Nearline tier and potentially cause a significant application performance issue.

- EasyTier will sort this performance problem out over time, but it will take time, and that’s not good for your users or your business.
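The fill-then-spill behaviour in the bullets above can be sketched with a little arithmetic. This is only a rough model, not how EasyTier is implemented: it assumes incoming data lands on the Enterprise tier until that tier is full and then spills to Nearline, and it ignores any demotion that happens during the migration. The function name, the volume sizes and the Nearline capacity are all made up for illustration.

```python
def place_migrated_data(volume_sizes_gb, enterprise_free_gb, nearline_free_gb):
    """Return one (enterprise_gb, nearline_gb) pair per migrated volume.

    Simplifying assumption: each volume fills the Enterprise tier first and
    spills to Nearline once Enterprise is full. EasyTier demotion during the
    migration is ignored, since it is the lowest-priority work.
    """
    placements = []
    for size in volume_sizes_gb:
        to_enterprise = min(size, enterprise_free_gb)
        to_nearline = size - to_enterprise
        if to_nearline > nearline_free_gb:
            raise ValueError("the pool does not have enough free capacity")
        enterprise_free_gb -= to_enterprise
        nearline_free_gb -= to_nearline
        placements.append((to_enterprise, to_nearline))
    return placements


# Hypothetical example: 1TB split into ten 100GB volumes, migrated into a pool
# with 400GB of Enterprise capacity. The Nearline size is an assumed figure.
placements = place_migrated_data([100] * 10, enterprise_free_gb=400, nearline_free_gb=1000)
for number, (enterprise_gb, nearline_gb) in enumerate(placements, start=1):
    print(f"volume {number}: {enterprise_gb}GB Enterprise, {nearline_gb}GB Nearline")
```

In this model the fifth volume onwards lands entirely on Nearline, whether or not its data is hot.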

So what can you do about it?

- Before starting each migration to the new pool, make sure that there is enough space to store the entirety of that volume in the Enterprise tier. If there isn’t, then I’d strongly recommend waiting until there is before starting the next migration.

- Install V7.5 or later on your SVC/Storwize and enable the “EasyTier acceleration mode” on your system. This acceleration mode does two things:

  - Performs data migrations at 4 times the speed of normal EasyTier operation (48 GiB every 5 minutes, rather than 12 GiB every 5 minutes).

  - Increases the priority of demotion to the same priority as rebalance and promotion. That means that if there is a lot of work to do, demotion should be doing around 16 GiB every 5 minutes for the entire system (assuming that your storage can go that fast).

- Alternatively, rather than creating a new pool you could always add all of the new capacity to your existing pool and slowly remove the old managed disks from the pool. You still need to worry about whether there is enough free capacity on the remaining Enterprise tier managed disks before removing each managed disk, but if you can leave the pool with both old and new storage for a few weeks or months then EasyTier will have already demoted data to the Nearline tier for you. The EasyTier acceleration mode will help in this scenario as well.

Remember that even if you turn on EasyTier acceleration mode, you still need to make sure there is enough space before starting new migrations, but now the space should become available much more rapidly.
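To get a feel for how long you might wait between migrations, here is a back-of-the-envelope sketch using the acceleration mode figures quoted above. It assumes the 16 GiB figure is simply the 48 GiB budget shared equally between promotion, rebalance and demotion, that your storage can sustain that rate, and that time is counted in whole 5 minute intervals. The function names are invented for this example.

```python
import math

INTERVAL_MINUTES = 5
NORMAL_TOTAL_GIB = 12        # all EasyTier movement per interval, normal mode
ACCELERATED_TOTAL_GIB = 48   # all EasyTier movement per interval, acceleration mode

# Assumption: demotion gets an equal share alongside promotion and rebalance.
ACCELERATED_DEMOTE_GIB = ACCELERATED_TOTAL_GIB / 3


def minutes_to_demote(cold_gib, demote_gib_per_interval):
    """Minutes needed to demote cold_gib, rounded up to whole intervals."""
    intervals = math.ceil(cold_gib / demote_gib_per_interval)
    return intervals * INTERVAL_MINUTES


def safe_to_migrate(volume_gib, enterprise_free_gib):
    """True if the whole volume fits in the free Enterprise tier capacity."""
    return volume_gib <= enterprise_free_gib


print(ACCELERATED_TOTAL_GIB // NORMAL_TOTAL_GIB)               # 4 (times faster)
print(minutes_to_demote(100, ACCELERATED_DEMOTE_GIB))          # 35
print(safe_to_migrate(volume_gib=100, enterprise_free_gib=50)) # False
```

There is no equivalent estimate for normal mode, because demotion only gets whatever is left of the 12 GiB budget after promotion and rebalance.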

Important: We do not recommend that you leave EasyTier in acceleration mode all of the time. There is a real risk that migrating 48 GiB of data every 5 minutes could overload one or more of your managed disks and cause a performance problem, so turn it off when you’ve finished migrating between pools.

Adding a new managed disk to a pool with acceleration mode

This isn’t really Nearline specific, but this seems like a good place to talk about it.

Adding capacity to an existing tier

If you are running SVC or Storwize code releases earlier than V7.3, then when you add an additional managed disk to an existing tier of a storage pool, the existing volume data doesn’t get migrated onto the new managed disk. That means that the existing volumes don’t get the benefit of the additional performance provided by that new managed disk, and if the storage pool gets very full then that new managed disk can become a hot-spot.

The EasyTier changes in V7.3 added a feature called Pool Balancing. It actively tries to make sure that each managed disk of the same tier in the pool is approximately equally loaded in terms of IOPS and MiB/s. This means that when you add a new managed disk to the pool, EasyTier will automatically start moving data onto that new managed disk. This will hopefully improve the performance of the existing volumes and will avoid the potential performance hotspot in the future. The Pool Balancing feature is available on all SVC and Storwize systems, even for systems without an EasyTier license.

Some customers have noticed that this balancing doesn’t happen as quickly as they would like. If you are anxious to make use of the performance from your new managed disk, turning on the EasyTier acceleration mode will make EasyTier move data onto the new managed disk faster.

Adding a new tier

The EasyTier acceleration mode will also allow you to make use of a new tier more rapidly, especially if the volumes have existed for a long time and EasyTier already knows what data it wants to promote or demote.

Gentle Warning

Beware that migrating data faster with the acceleration mode can overload your backend storage, so be careful if using it during your busiest periods.

Space-efficient or compressed volumes with a Nearline tier

This is the most difficult one to give advice for, so I’ll simply give you the facts.

Thin Provisioning and EasyTier keep an amount of “emergency capacity” for each individual volume. This emergency capacity is used to keep the volume online in case the storage pool runs out of space. Once the emergency capacity for that volume runs out, the volume will go offline. There are more details about this in this earlier blog post.

The emergency capacity on a new volume is the value of the rsize parameter when you create it. On an existing volume, the emergency capacity is the difference between the real capacity and the used capacity.

When new data is written to a thin or compressed volume, that data is often going to be stored on the “oldest” extent in that emergency capacity. If you have a large emergency capacity (the GUI default is 2% of the total volume capacity) then there is a high likelihood that the oldest extent has been demoted and is now living on the Nearline tier.

The only way of avoiding this is to have a small emergency capacity. If the emergency capacity is about 1 or 2 extents in size, then there’s a good chance that you will allocate the extent and start writing on it before the extent gets demoted down to Nearline storage. However this comes with a significant health warning, because now if the storage pool runs out of space, the volumes can run out of space and go offline much more rapidly!
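To put some numbers on this, the sketch below works out how many extents of emergency capacity a volume ends up with. It is purely illustrative: the function names are made up, the 2 TiB volume and 1 GiB extent size are assumed example values (the extent size is whatever you chose when the pool was created), and partial extents are rounded down.

```python
def emergency_capacity_gib(real_gib, used_gib):
    """Emergency capacity of an existing volume: real minus used capacity."""
    return real_gib - used_gib


def emergency_extents(volume_gib, rsize_percent, extent_gib):
    """Whole extents of emergency capacity on a new volume (rounded down)."""
    return (volume_gib * rsize_percent) // (100 * extent_gib)


# Existing volume with 100 GiB real capacity and 70 GiB used.
print(emergency_capacity_gib(real_gib=100, used_gib=70))                # 30

# New 2 TiB volume with the 2% GUI default and an assumed 1 GiB extent size.
print(emergency_extents(volume_gib=2048, rsize_percent=2, extent_gib=1))  # 40
```

With 40 extents sitting idle there is plenty of opportunity for the oldest ones to be demoted before they are written to, which is why only a capacity of 1 or 2 extents avoids the problem.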

I’m hoping that we can improve this in the future – but for now your options are to avoid a Nearline tier, or to have small emergency capacities on your volumes.
