You are prepared. You have the monitoring. You have the protection. You feel safe.

But if the worst happens and your data is held ransom after a latent ransomware trojan is triggered, how long would you take to recover?

You figure its OK, you have HA and/or DR… but those are now held ransom too…

You have the backups, lets recover from backup…

But how long would it take you to recover the data?

The industry average is 23 days… yes 23 days! Would you still have customers after 23 days of no access to your services, and you cannot access your supply chain, your invoicing, your stock data, your … well everything?!

With an IBM Cyber Vault design, you have the process and infrastructure, all underpinned by IBM Safeguarded copy in Spectrum Virtualize, that means you could recover in seconds. To learn more, read on…

IBM Cyber Vault

I discussed Cyber Vault briefly last year, the idea is quite simple. If you have a vaulted environment, some safe and secure compute, you can bring up a copy of an immutable point in time copy of your data that was take pre ransom, validate the data and instantly restore this to your production systems, eradicating the infected (encrypted) data. Sounds simple, and with all things Spectrum Virtualize, the generation of automatic immutable snapshots is simple to configure and automate.

The Cyber Vault process you define dictates what you do in your normal business day with those point in time copies. You may as well mount a copy, validate it, then you know you have a good point in time to revert to should you need to. In this way, you can recover in seconds.

Hopefully you get the idea and you can check out this solution brief for more details.

But in this post I wanted to talk about the new announced hardware platforms that are all enabled for IBM Safeguarded Copy, the feature at the heart of a Cyber Vault.

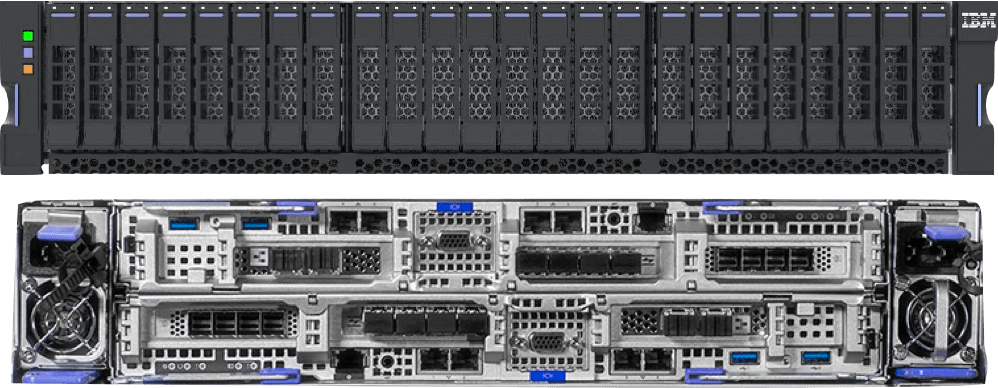

Introducing IBM FlashSystem “the beast” 9500

I’ve been calling this box “the beast” and for any ‘The Chase’ fan’s I’m not referring to Mark Labbett, but the new top of the line FlashSystem. With 48x NVMe slots, 12x Gen4 PCIe Host Interface slots (48x 32Gbit FC, 12x100GbE, 24x25GbE), 4x PSU, 4x Battery, 4x boot devices – this is the largest and most powerful FlashSystem we’ve produced, and based on the performance measurements it has to be one of, if not the fastest, highest bandwidth single enclosure systems in the world!

The control enclosure comes in at 4U in height, with two hot swap-able rear mounted node canisters. Each canister has redundant hot swap-able power supplies, batteries and Secure Boot EFI enabled boot devices, meaning any of these components can be swapped without powering off or removing the node canister itself.

The host interface cards are installed in cold swap-able cages. Each cage can have one or two PCIe cards, depending on the connectivity type, for example two FC or 25GbEcards, a mix of FC and 25GbE cards, or one 100GbE card. These cages do require the node canister to be powered off to replace, but changing the interface cards is not a frequent task – if ever.

The entire system is PCIe Gen4 based, giving double the bandwidth over Gen3, it allows the FlashSystem 9500 to achieve impressive performance numbers. Over 90GB/s read for example.

Speaking of Gen4, the large (19.2TB) and extra-large (38.4TB) self compressing FlashCore Modules (FCM) are now boasting Gen4 connectivity with individual drive bandwidths of over 3GB/s possible. See the FCM3 section below.

The internals have switched up to the Intel Ice Lake Gold Xeon processors, with 96 hyperthreaded cores per 9500. The cache comes as standard as 1TiB, but can be upgraded in two stages to 3TiB… These improvements and some code enhancements mean the hero number (read cache hit) jumps to 7.5 million IOPs. However, to keep it real, unlike some other vendors, a real life 70/30/50 transaction processing number is in excess of 1.5 million IOPs – more than enough for most people!

Introducing FlashSystem 7300

The 7300 is more of a traditional refresh over the 7200 it replaces. With an uptick in clock speed and the core count to 40 Cascade Lake Intel Xeons, the 7300 delivers around 25% more performance than its predecessor.

Added support for FCM3 and 100GbE host connectivity rounds off the enhancements at a hardware level. The box itself retains the 2U form factor, with 24x NVMe Gen3 slots.

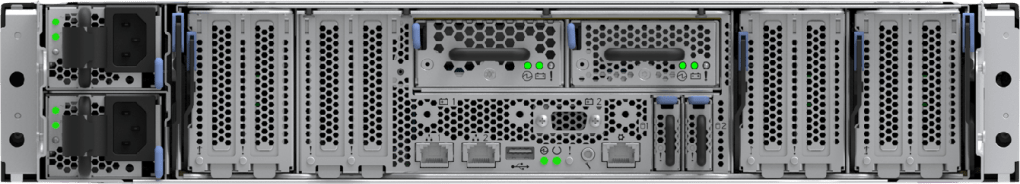

Introducing IBM SAN Volume Controller SV3 Node Hardware

The SV3 node hardware is essentially based off the same node canister you find in the 9500. So all the hardware details I outlined above come with the SV3. This means we go back to hot swap-able external batteries and boot devices (like SV1) and an increase to the host interface slots from 6 (SV2) and 8 (SV1) to 12 in the SV3.

Added support for 100GbE connectivity and the huge core (96) and cache counts (3TB) mean this is an SVC node unlike any before it in terms of performance capability.

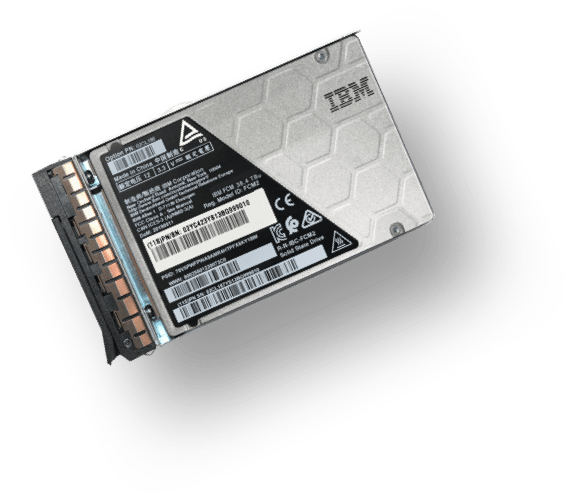

Introducing IBM FlashCore Module3 (FCM3)

I mentioned this above, but the latest generation of FCM that will be shipping in all newly purchased FlashSystem 5200,7300 and 9500 will be FCM3. These are PCIe Gen4 capable in the two larger capacities. The capacities stay the same, with 4.8, 9.6, 19.2 and 38.4TB modules available.

However, due to some clever management of the internal drive meta-data we can now achieve up to 3:1 compression on the larger two capacities, thus meaning, even when fully written, the 19.2 and 38.4TB modules can store over 57TB and 115TB respectively!

Internal computational storage capabilities have also been enhanced with a new “hinting” interface that allows the FCMs and the Spectrum Virtualize software running inside all FlashSystem products to pass useful information regarding the use, heat, and purpose of the I/O packets. This allows the FCM to decide how to handle said I/O, for example if its hot, place it in the pSLC read cache on the device. If its a RAID parity scrub operation, de-prioritise ahead of other user I/O.

Single FCMs are now capable of more IOPs than an entire storage array from a few years ago!

Conclusion

Phew, so thats a whole load to take in. The work by the IBM Storage development teams has yet again been amazing. During a global pandemic, to refresh the entire line of NVMe products really is no simple task with most people working remotely and not able to touch the hardware.

The family is set to take us into the new hopefully post-pandemic world, not only in terms of the latest and greatest NVMe based technologies, but also the ever present threats of Cyber-criminals.

If you haven’t looked at IBM Storage for a while, get in touch, we really have what I feel are the best set of solutions and capabilities of any vendor, and the price might just be a pleasant surprise.

If you are in the AP timezone, we have a webinar discussing Cyber Vault and the new FlashSystem products on the 15th February at 3pm NZ time (GMT+12) – feel free to register and I will see you there.

Leave a comment