ORIGINALLY POSTED 24th August 2017

10,961 views on developerworks

On Tuesday 22nd August we announced the latest code update that will be available soon for the Spectrum Virtualize family.

For the first time since 2012 we have bumped the major version number, to 8. This signifies some exciting new features that begin with this release in 3Q and will be expanded greatly in the 4Q release later this year. But for now, what’s new in v8.1.0?

Automatic Hot Spare Nodes

Last year we expanded on the scripted ‘warm standby’ node procedure by allowing you to configure spare nodes in a cluster. This didn’t automatically swap the nodes; for that you needed to run a command and make the swap decision yourself. Now with v8.1 the system can automatically bring in the spare node to replace a failed node, or to keep the system whole during maintenance tasks such as software upgrades.

Up to 4 spare nodes can be added to a single cluster, and they must match the hardware type and configuration of your active cluster nodes. That is, in a mixed node cluster you should have one spare of each node type. Given that v8.1 is only supported on SVC DH8 and SV1 nodes this isn’t a huge deal, and most people upgrade the whole cluster to a single node type anyway. However, in addition to the node type, the hardware configurations must match: specifically the amount of memory, and the number and placement of Fibre Channel and compression cards.
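To make the matching rule concrete, here is a minimal Python sketch of the check described above. It is only an illustration: the `NodeHardware` class, its field names, the helper functions and the example memory and card values are all made up for this post and are not part of any Spectrum Virtualize interface.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class NodeHardware:
    """Hypothetical description of one node's hardware (illustrative only)."""
    node_type: str                        # e.g. "DH8" or "SV1"
    memory_gb: int                        # installed memory
    adapters: Tuple[Optional[str], ...]   # card kind per slot, in slot order; None = empty


def spare_matches(spare: NodeHardware, active: NodeHardware) -> bool:
    """A spare can stand in for a node only if type, memory and card placement all match."""
    return (
        spare.node_type == active.node_type
        and spare.memory_gb == active.memory_gb
        and spare.adapters == active.adapters
    )


def uncovered_configs(active_nodes: List[NodeHardware],
                      spares: List[NodeHardware]) -> List[NodeHardware]:
    """Return each distinct active hardware configuration that no spare matches."""
    uncovered: List[NodeHardware] = []
    for node in active_nodes:
        covered = any(spare_matches(spare, node) for spare in spares)
        if not covered and node not in uncovered:
            uncovered.append(node)
    return uncovered


# Example with made-up configurations: a mixed cluster with only an SV1 spare.
sv1 = NodeHardware("SV1", 256, ("FC16", "FC16", None, "COMP"))
dh8 = NodeHardware("DH8", 64, ("FC16", "COMP", None, None))
print(uncovered_configs([sv1, sv1, dh8, dh8], spares=[sv1]))
```

In this example the DH8 configuration comes back as uncovered, which is the "one spare of each node type" point: a mixed cluster needs a matching spare for every distinct configuration it wants protected.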

One point that came out of discussions with a couple of customers: if you have a DH8 based cluster you will likely have to order some SV1 nodes to upgrade, freeing up some DH8 nodes to use as spares, as we have run out of supply of DH8 hardware!

The Hot Spare node essentially becomes another node in the cluster, but normally isn’t doing anything. Only when needed does it use the NPIV nature of the host virtual ports to take over the personality of the failed node. The cluster waits about a minute before it swaps in a spare. This is because we don’t want to end up thrashing around when a node fails; we want to be sure it’s dead before we swap. Meanwhile, because you have NPIV enabled, the host shouldn’t see anything during this time. The first thing that happens is that the failed node’s virtual host ports fail over to the partner node. Then, when the spare swaps in, they fail over to that node. Obviously the cache will flush while only one node is in the IO Group, but when the spare swaps in you gain full cache back.

One other thing to note is that we won’t take the spare when a node warm starts, i.e. after a code assert or restart, since that’s a transient error and the node will return in under a minute.
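The swap decision described above can be sketched in a few lines of Python. This is just a toy model of the behaviour as I have described it, not the actual cluster code: the state names, the function and the exact 60 second threshold are assumptions (the real delay is only "about a minute").

```python
from enum import Enum


class NodeState(Enum):
    """Hypothetical node states, named for this illustration only."""
    ONLINE = "online"
    WARM_START = "warm_start"   # code assert or restart; expected back in under a minute
    OFFLINE = "offline"         # node has actually gone away


# Assumption: a fixed threshold standing in for "about a minute".
SWAP_DELAY_SECONDS = 60


def should_swap_in_spare(state: NodeState,
                         seconds_in_state: float,
                         spare_available: bool) -> bool:
    """Decide whether a spare should take over a node's personality."""
    if not spare_available:
        return False
    if state is not NodeState.OFFLINE:
        # Online nodes need no spare; warm starts are transient, so we wait them out.
        return False
    # Hold off until we are sure the node is dead, to avoid thrashing.
    return seconds_in_state >= SWAP_DELAY_SECONDS


print(should_swap_in_spare(NodeState.OFFLINE, 10, True))
print(should_swap_in_spare(NodeState.OFFLINE, 90, True))
print(should_swap_in_spare(NodeState.WARM_START, 90, True))
```

During the waiting period the partner node is already serving the failed node's virtual ports, so the delay costs some cache and performance but not host access.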

The other use case for Hot Spare Nodes is software upgrades. Normally the only impact during an upgrade may be to performance: while the node that is upgrading is down, the partner in the IO Group will be writing through the cache and handling both nodes’ workload. To work around this, the cluster will take a spare in place of the node that is upgrading, so the cache doesn’t need to go write-through. Once the upgraded node returns it is swapped back, so you end up rolling through the nodes as normal but without any failover and failback seen at the multipathing layer. All of this is handled by the NPIV ports and so should make upgrades a less nervous time!

Only once the cluster has committed the new code level will the cluster take care of upgrading the spares to match the cluster level.
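As a rough picture of the ordering, the sketch below lists the steps of a rolling upgrade with and without a spare. It is purely illustrative: the function, the node names and the step wording are invented here, and the real upgrade process has more stages than this.

```python
from typing import List, Optional


def rolling_upgrade_plan(nodes: List[str], spare: Optional[str] = None) -> List[str]:
    """Return an ordered list of steps for a simplified rolling upgrade."""
    steps: List[str] = []
    for node in nodes:
        if spare is not None:
            # The spare takes the node's place, so the IO Group keeps two nodes.
            steps.append(f"swap {spare} in for {node}")
            steps.append(f"upgrade {node}")
            steps.append(f"swap {node} back in and release {spare}")
        else:
            # No spare: the partner runs alone with its cache in write-through.
            steps.append(f"partner of {node} goes write-through")
            steps.append(f"upgrade {node}")
            steps.append(f"{node} rejoins and cache is restored")
    steps.append("commit new code level")
    if spare is not None:
        # Spares are only brought up to the new level after the commit.
        steps.append(f"upgrade {spare}")
    return steps


for step in rolling_upgrade_plan(["node1", "node2"], spare="spare1"):
    print(step)
```

The point to notice is the last two steps: the spare stays at the old level until the cluster has committed, and only then is it upgraded to match.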

This feature has been talked about for years and has been high on our customers’ wish list. It needed NPIV capable hardware (the 16G cards, and don’t forget to check your switches are NPIV capable) and the NPIV software management as a precursor, but here we are, finally.

Note also that this is an SVC only feature. While Storwize can make use of NPIV and get the general failover benefits, you can’t get spare canisters. Oh, and yes, you can use them in site aware configs, i.e. Enhanced Stretched Cluster or HyperSwap, by assigning a site to the spares as well, though you will definitely want 2 spares here!

The spare nodes are also available as an option on FlashSystem V9000 systems that use an external switch for Flash enclosure attachment.

Performance Enhancements

Since we introduced the DH8 hardware we have been making some use of the extra cores available, mainly for isolating the GUI and base OS functions as well as compression. The main IO process code was mostly running on just 8 threads.

In v8.1 we have worked on the internal memory management, and my successor as performance architect has been beavering away on lock removal and other such code tuning, to start unleashing the performance available by running some of the internal components on more threads in parallel across the SMP processor complex. This has resulted in up to 50% improvement in IOPS on read miss workloads, and almost 40% improvement on mixed read/write workloads. (Measured on SV1 hardware; DH8s see 40% and 36% respectively.)

Simply upgrading to v8.1 when it’s available will unlock this new potential on your hardware. The same goes for FlashSystem V9000 products of course.

Cache Memory Increases

We told you it was coming, and recommended you purchase the new SV1 nodes with the full 256GB cache per node. In v8.1 the system now makes full use of this memory, so all 256GB is available as read cache; the write cache sizes remain unchanged at this time.

In addition, those of you with Storwize V7000 Gen2+ (Model 624 canisters) can now upgrade to 128GB cache per canister, so 256GB per control enclosure.

If you are using compression it will still take its memory as needed and the rest will be available for cache to use.

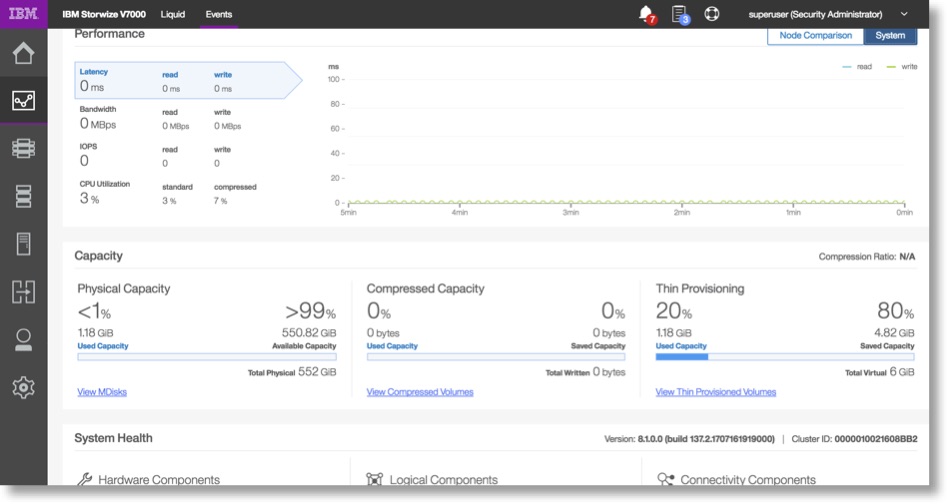

The New GUI

We’ve updated the GUI with the latest IBM Storage Design Language, giving a common approach to fonts, colours, UI elements and user interaction with other IBM storage products. Here is a sneak peek at the interface showing the new dashboard view. I will cover this in more detail in a subsequent post.

The final set of items includes an integrated System Health dashboard in the new GUI, and Remote Support Assistance (similar to what has been available on XIV and DS8000), which includes amongst other things the ability to automatically upload support logs. Finally, there is access to a cloud based health checker (this initially requires that the inventory email is turned on, so if you haven’t done so, please do it now!).

Again, there is a lot there, so I will save the details for another post in the next few days.

v8.1.0 WILL NOT be supported on Gen1 Storwize Products, and older SVC Hardware

The last software release that supports the following products is v7.8.1. Continued software support for these products will be maintained only on the v7.8.1 code stream, so PTFs will continue with fixes, security updates etc., but no new function.

These ‘gen1’ products include:

| “Gen1” product | Machine type (M/T) | Models |
|---|---|---|
| SVC | 2145 | CF8, CG8 |
| V840 | 9846/8 | AC0 |
| V7000 | 2076 | 1xx, 2xx, 3xx |
| V5000 | 2077/8 | 24C, 12C, 24E, 12E |
| V3700 | 2072 | all models |
| V3500 | 2071 | all models |
