ORIGINALLY POSTED 27th May 2016


Introducing Spectrum Virtualize Version 7.7.0 Software

This week I have been travelling in the Nordics. Despite now living in New Zealand, I can’t escape from the great User Group events that the Swedish team have been running for many years now, and it’s great to actually visit Stockholm in the ‘almost’ summer, rather than my usual visit in chilly December. On Tuesday we also held our first Spectrum Storage User Group in Denmark, and it was great to see over 50 new and familiar faces at this inaugural event, which everyone agreed was a great success.

Coincidentally, on Tuesday we also announced the latest version of Spectrum Virtualize (7.7.0), which will be available for download next month for SVC, Storwize, FlashSystem V9000 and VersaStack.

Highlights of 7.7.0

- Improved continuous availability
  - Node port failover using NPort ID Virtualisation (NPIV) to ‘failover at the fabric’ rather than host multipath failover.
- Lower cost storage and networking
  - Virtualization of selected iSCSI attached storage
  - Compressed data transfer for IP Replication links
- Improved performance
  - Greater use of system memory for caching
- New flash and drive options
  - 1.92TB lower cost Flash drive options (Ideal for Read Intensive workloads)
  - Hybrid V9000 – attach up to two SAS enclosures of 8TB NL-SAS for up to 160TB usable RAID-6 in Easy Tier
  - Extended offering of SVC direct attached storage including HDD storage
- New Differential Licensing
  - ‘Value’ based licensing model significantly reducing the cost for virtualizing HDD based storage
  - Including VSC licenses, and Spectrum Control
- Spectrum Virtualize Software Only
  - Open beta program for ‘roll your own’ SVC software deployment
- Comprestimator and IP Quorum details integrated in GUI
- Support for Encryption on Distributed RAID arrays.

NPIV Support

The usage model for all Spectrum Virtualize products is based around a 2-way active/active node model: a pair of distinct control modules that share active/active access to a given volume. Each node has its own Fibre Channel WWNN, so the ports on each node present their own set of WWPNs to the fabric.

Traditionally, should one node fail or be removed for some reason, the paths presented for volumes from that node would go offline, so we rely on the native OS multipathing software to fail over from using both sets of WWPNs to just those that remain online. While this is exactly what multipathing software is designed to do, it can be problematic, particularly if paths are not seen as coming back online for some reason. (Linux used to be terrible for this!) More importantly, we are at the mercy of software outside our control.

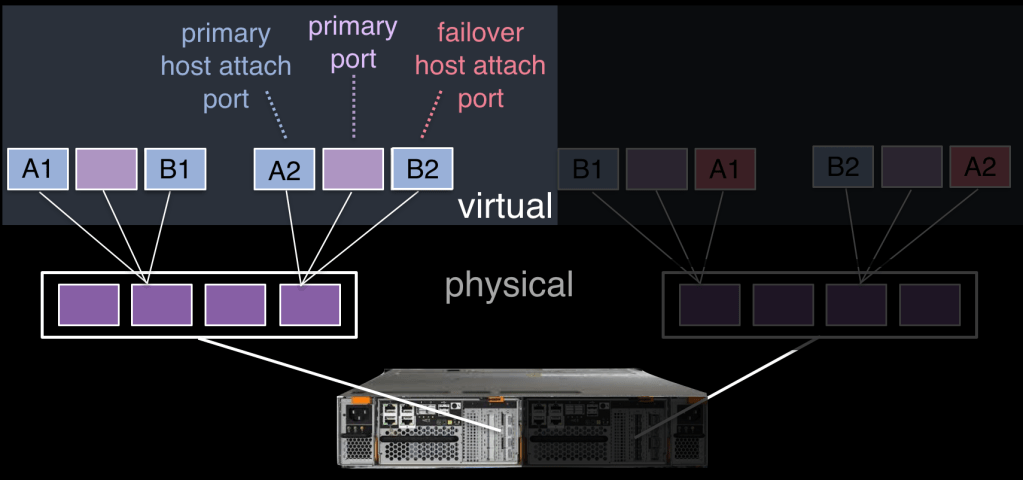

Starting from 7.7.0, we now can switch into a mode where we take care of this at the node level using NPIV. Essentially when enabled (and there is a transitional mode for backwards compatibility during the transition period) each physical WWPN will report up to 3 virtual WWPNs.

| Virtual port | Role |
| --- | --- |
| Primary NPIV Port | This is the WWPN that will communicate with backend storage, and be used for node-to-node traffic (local or remote). |
| Primary Host Attach Port | This is the WWPN that will communicate with hosts, i.e. a target port only. This is the primary port, and therefore represents this local node’s WWNN. |
| Failover Host Attach Port | This is a standby WWPN that will communicate with hosts and will only be brought online on this node if the partner node in this I/O Group goes offline. This will be the same as the Primary Host Attach WWPN on the partner node. |

That’s a lot of words, and hopefully the pictures below will make things much clearer!

Figure 1 : The Allocation of NPIV virtual WWPN ports per physical port (Note the example shows only 2 ports per node in detail, but the same applies for all Physical ports)

This shows the 3 virtual ports; note that the failover host attach port (in pink) is not active at this time. The second example shows what happens if the second node has failed. Now the failover host attach ports on the remaining node are active and have taken on the WWPNs of the failed node’s primary host attach ports.

Figure 2 : The allocation of NPIV virtual WWPN ports per physical port after a node failure.
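For those who prefer code to pictures, the behaviour in Figures 1 and 2 can be sketched as a toy model. This is purely illustrative and is not how the product is implemented: the class names, function names and the WWPN strings (such as `node1:host:p1`) are made-up placeholders, and the model only covers a single I/O Group of two nodes. What it tries to show is the key point of NPIV failover: the set of host-facing WWPNs visible on the fabric should be the same before and after a node failure.

```python
from dataclasses import dataclass


@dataclass
class PhysicalPort:
    """One physical FC port and its three NPIV virtual WWPNs (toy model)."""
    primary_wwpn: str    # backend storage and node-to-node traffic
    host_wwpn: str       # primary host attach port (target only)
    failover_wwpn: str   # standby copy of the partner's host attach WWPN
    failover_active: bool = False

    def active_host_wwpns(self):
        """Host-facing WWPNs currently online on this physical port."""
        wwpns = [self.host_wwpn]
        if self.failover_active:
            wwpns.append(self.failover_wwpn)
        return wwpns


@dataclass
class Node:
    name: str
    ports: list
    online: bool = True


def make_io_group(ports_per_node=2):
    """Build two partner nodes. WWPN strings are hypothetical placeholders."""
    nodes = []
    for me, partner in (("node1", "node2"), ("node2", "node1")):
        ports = [
            PhysicalPort(
                primary_wwpn=f"{me}:primary:p{p}",
                host_wwpn=f"{me}:host:p{p}",
                # The failover port mirrors the partner's host attach WWPN.
                failover_wwpn=f"{partner}:host:p{p}",
            )
            for p in range(1, ports_per_node + 1)
        ]
        nodes.append(Node(me, ports))
    return nodes


def fail_node(nodes, failed_name):
    """Take one node offline and activate failover ports on its partner."""
    for node in nodes:
        if node.name == failed_name:
            node.online = False
        else:
            for port in node.ports:
                port.failover_active = True


def host_visible_wwpns(nodes):
    """All host-facing WWPNs currently presented to the fabric."""
    visible = set()
    for node in nodes:
        if node.online:
            for port in node.ports:
                visible.update(port.active_host_wwpns())
    return visible


io_group = make_io_group()
before = host_visible_wwpns(io_group)
fail_node(io_group, "node2")
after = host_visible_wwpns(io_group)
print(sorted(before))
print(sorted(after))
```

In this model the two printed lists are identical, which is the whole idea: from the host’s point of view the target WWPNs never went away, so the failover is handled at the fabric rather than by the host multipathing software.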

In release 7.7.0, this all happens automatically when you have enabled NPIV at a system level. At this time, the failover only happens automatically between the two nodes in an I/O Group. However, a new command, ‘swapnode’, can be used to take a spare node and swap that node into the cluster for any given node. This can be used to regain redundancy if the node failure is going to take some time to recover. You can expect further enhancements to the feature set that will make use of NPIV in the not too distant future.

I will cover the other features in a few more posts over the next week or so, for now I have the small matter of travelling back to New Zealand from Sweden… see you on the other side.
