ORIGINALLY PUBLISHED 13 November 2017

Background

It’s been a highly requested feature to have SVC or Storwize keep a single volume available in three sites. The most common use case is a pair of synchronously replicating datacentres that provide zero data loss if you have a disaster at a single site, plus a third copy of the data that is geographically further away to protect against the type of issues that can affect, for example, an entire city.

We have had solutions for some time that use the SVC stretched cluster to provide High Availability (HA) across two locations, combined with Global Mirror or Global Mirror with Change Volumes to replicate to a third site. In the extreme, this third site can also be a stretched cluster, giving you a four-site solution.

However, working with clients over the last 12 months, we have realised that we already have a solution that allows a single volume to be mirrored to two different locations without the need for a stretched cluster. Describing that solution is the purpose of this blog.

Overview

The solution described here will work on SVC or Storwize. It requires 7.8.1 as a minimum code level. It is possible to implement the same solution on earlier code levels, but it is more complex. At the end of the blog post I will try to give you the differences needed if you want to implement this on earlier releases.

This solution is ideal for

- Customers who need a temporary third copy for data centre migration purposes

The basic idea is that you add a “scripted” copy of the data at site B, then remove the standard remote copy relationship from site B and create a new remote copy relationship to site C. This means there is never a time when you don’t have a DR copy at one of the sites. (You could also achieve the same goal by putting the “scripted” copy at site C.)

- Scenarios where you want a copy of data at a site without any servers (sometimes known as a data bunker).

The reason for limiting the solution to these scenarios is that the steps needed to fail back from the third site to the production site are more complex than with normal replication, and are therefore more susceptible to human error.

Author’s Note: There’s no chance I will get all of your potential questions covered in here – so feel free to ask away in the comments.

Basic Configuration

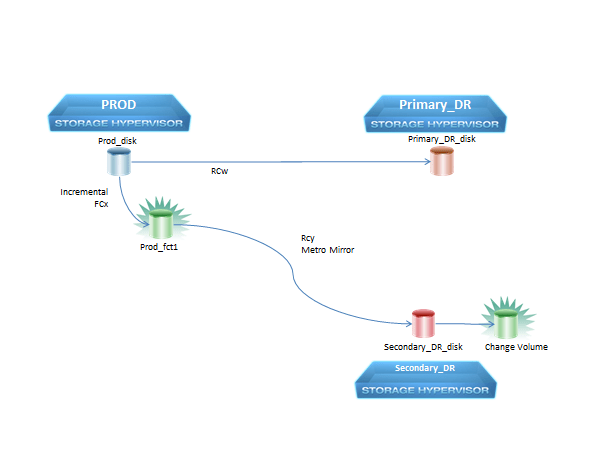

The picture above shows the basic configuration needed for every volume that is replicated to three clusters.

Detailed Configuration

- In the picture above, production is running at the cluster labelled PROD. This solution will work just as well if production is running at the site labelled Primary_DR.

- Production and Primary DR disk can be any type of volume (Fully Allocated, Thin, Compressed)

- Prod_fct can be any type of volume (Fully Allocated, Thin, Compressed) and will contain a full copy of the data on Production

- The Change Volume at Secondary_DR should be Thin or Compressed to save space, and will only contain the changes between a cycle of the script, not a full copy of the data

- The replication between Prod and Primary DR can be any of:

    - Metro Mirror

    - Metro Mirror with consistency protection

    - Global Mirror

    - Global Mirror with consistency protection

    - GMCV

- Unfortunately this solution does not work with HyperSwap yet.

- The relationship between Prod and Secondary_DR should be Metro Mirror with consistency protection (i.e. it has a change volume associated with it).

- Because this Metro Mirror relationship is not in the path of any host I/O, the latency of the link does not matter. GM and GMCV would work, but they add overheads for no additional benefit.

- The FlashCopy between the Prod_disk and the Prod_fct MUST be an incremental FlashCopy with a non-zero background copy rate.

If using Consistency Groups, the RCw consistency group MUST contain the same set of volumes as the RCy consistency group and the FCx consistency group.
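The configuration rules above lend themselves to a pre-flight check that a script could run before it starts its loop. This is a minimal Python sketch. It assumes you have already read the FlashCopy map and relationship details into the hypothetical `FcMap` and `RcRelationship` records shown here; those record and field names are illustrative and are not part of the SVC CLI. The sketch checks the incremental FlashCopy rule and the change volume rule, and confirms that RCw, FCx and RCy cover the same volumes.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class FcMap:
    """Hypothetical record for one FCx map (Prod_disk -> Prod_fct)."""
    source: str
    target: str
    incremental: bool
    copy_rate: int


@dataclass
class RcRelationship:
    """Hypothetical record for one RCy relationship (Prod_fct -> Secondary_DR)."""
    master: str
    aux: str
    aux_change_volume: str | None = None


def check_config(rcw_prod_volumes: set[str], fcx: list[FcMap],
                 rcy: list[RcRelationship]) -> list[str]:
    """Return rule violations; an empty list means the layout looks valid."""
    problems: list[str] = []
    for m in fcx:
        if not m.incremental or m.copy_rate <= 0:
            problems.append(f"FCx {m.source}: must be incremental with a non-zero copy rate")
    for r in rcy:
        if r.aux_change_volume is None:
            problems.append(f"RCy {r.master}: needs a change volume (consistency protection)")
    # RCw, FCx and RCy must cover the same volumes: each RCw production disk
    # is an FCx source, and each FCx target is an RCy master.
    if {m.source for m in fcx} != rcw_prod_volumes:
        problems.append("FCx sources do not match the RCw production volumes")
    if {r.master for r in rcy} != {m.target for m in fcx}:
        problems.append("RCy masters do not match the FCx targets")
    return problems


fcx = [FcMap("prod_01", "prod_fct_01", incremental=True, copy_rate=50)]
rcy = [RcRelationship("prod_fct_01", "sdr_01", aux_change_volume="sdr_cv_01")]
print(check_config({"prod_01"}, fcx, rcy))  # []
```

The sketch returns a list of problems rather than raising on the first one, so a single run reports every misconfigured volume.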

Normal Operation

In day to day operations, where all three sites are operational, a script is required to perform the following loop:

1. Check that it’s still appropriate to run the replication loop.

    a. IMPORTANT: You must not start the next iteration of the loop if the data on Prod_disk is inconsistent.

        The most likely scenario where this would happen is if you are using Metro Mirror or Global Mirror and you are restarting a stopped relationship from Primary_DR to Prod. In this scenario, the RCw relationship will be in an inconsistent_* state (for example, inconsistent_copying).

        The simplest solution is not to start the cycle if RCw is in any inconsistent_* state. If you want to be a bit more advanced, you also need to check whether the RC relationship is copying onto the Prod disk or off it. Only copying onto the Prod disk leaves Prod_disk inconsistent.

    b. You should also perform other checks that will be environment specific (e.g. how will you know if you are running a DR test, or even your production workload, at the Secondary_DR site?).

2. Start FCx

3. Start RCy

4. Wait for RCy to reach 100% copied

5. Wait for FCx to reach 100% copied

6. Sleep until it is time to send the next copy

7. Stop RCy

8. Repeat
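The loop above can be sketched in Python roughly as follows. This is a minimal sketch that assumes a hypothetical CLI wrapper object with methods such as `rc_state()` and `start_fc()`. These names are illustrative and are not an IBM API. A real script would implement them with SVC commands such as `startfcmap`, `startrcrelationship`, `stoprcrelationship`, `lsrcrelationship` and `lsfcmap` (or the consistency group equivalents). The `DryRunCli` class below only records what it would do, which makes it useful for testing the loop logic without touching a cluster. The environment-specific checks are left for you to add.

```python
import time
from typing import Callable


class DryRunCli:
    """Hypothetical CLI wrapper that only records actions (no SSH, no cluster)."""

    def __init__(self, rcw_state: str = "consistent_synchronized",
                 prod_is_primary: bool = True) -> None:
        self.rcw_state = rcw_state
        self.prod_is_primary = prod_is_primary
        self.log: list[tuple[str, str]] = []

    def rc_state(self, rel: str) -> str:
        return self.rcw_state

    def rc_primary_is_prod(self, rel: str) -> bool:
        return self.prod_is_primary

    def rc_progress(self, rel: str) -> int:
        return 100

    def fc_progress(self, fcmap: str) -> int:
        return 100

    def start_fc(self, fcmap: str) -> None:
        self.log.append(("start_fc", fcmap))

    def start_rc(self, rel: str) -> None:
        self.log.append(("start_rc", rel))

    def stop_rc(self, rel: str) -> None:
        self.log.append(("stop_rc", rel))


def prod_disk_consistent(rcw_state: str, prod_is_primary: bool) -> bool:
    """Step 1a: an inconsistent RCw only matters when Prod_disk is its target."""
    return prod_is_primary or not rcw_state.startswith("inconsistent")


def seconds_until_next_cycle(cycle_started: float, now: float,
                             period: float = 300.0) -> float:
    """Step 6: start cycles no more often than once per `period` seconds."""
    return max(0.0, period - (now - cycle_started))


def run_cycle(cli, rcw: str, fcx: str, rcy: str,
              wait: Callable[[float], None] = time.sleep,
              poll: float = 30.0) -> bool:
    """Steps 1-5. Returns False, without touching anything, if the check fails."""
    if not prod_disk_consistent(cli.rc_state(rcw), cli.rc_primary_is_prod(rcw)):
        return False
    cli.start_fc(fcx)                    # step 2: incremental copy into Prod_fct
    cli.start_rc(rcy)                    # step 3: send Prod_fct to Secondary_DR
    while cli.rc_progress(rcy) < 100:    # step 4
        wait(poll)
    while cli.fc_progress(fcx) < 100:    # step 5
        wait(poll)
    return True


def replicate_forever(cli, rcw: str, fcx: str, rcy: str, period: float = 300.0) -> None:
    """Steps 6-8; add your own disaster detection before each pass."""
    while True:
        started = time.monotonic()
        ran = run_cycle(cli, rcw, fcx, rcy)
        time.sleep(seconds_until_next_cycle(started, time.monotonic(), period))
        if ran:
            cli.stop_rc(rcy)             # step 7: stop RCy before the next pass
```

Passing `wait` in as a parameter keeps the polling testable. The default 300-second period follows the once-every-5-minutes guideline from Global Mirror with Change Volumes.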

Implementation Notes

- The script will need a way to know which FlashCopy maps or consistency groups it should control.

- For step 1a it will need a way to discover the two Remote Copy relationships that are tied to the FlashCopy maps. This can either use naming conventions, or can be discovered by a script.

- How often will you add new relationships to your configuration? Do you want to have to stop (maybe modify a configuration file) and restart the script every time you add a relationship? If not, think about how the script can automatically detect the changes, and how often it should check.

- Guidelines from Global Mirror with Change Volumes about how long to wait between cycles apply equally to this solution:

    http://www-01.ibm.com/support/docview.wss?uid=ssg1S1010102

    The best practice to avoid unnecessary delays to production is to run the cycles no more often than once every 5 minutes, although for small configurations with the latest hardware a shorter interval might be acceptable.

- IMPORTANT: This script needs to have some way to detect that a disaster has happened and stop trying to replicate. There are several ways this could be achieved, and they need to be integrated into your operational handbooks. Some examples could include:

    - Run a command on all three clusters at the start of the loop to ensure that all three clusters are operational.

    - Have an operational team run the “chpartnership -stop” command to block the inter-cluster partnership between PROD and Secondary_DR in the event of an emergency.

- If you are using other FlashCopies at either Prod or Secondary_DR then remember that stopping the FlashCopies can take many minutes to complete.

- Remember to check the return code of every CLI command and handle SSH connection failures, rather than assuming that every CLI command always succeeds.
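As a minimal sketch of the last two notes, the Python below wraps each CLI call so that a non-zero exit status, an SSH connection failure or a timeout raises an error instead of being ignored. (OpenSSH exits with 255 when the connection itself fails.) The sketch then uses that wrapper to check that all three clusters respond at the start of the loop. It assumes key-based SSH access to each cluster. The function names are hypothetical, and `lssystem` is used only as a harmless read-only command.

```python
import subprocess
from typing import Callable, Sequence


class CliError(RuntimeError):
    """Raised when an SSH connection or a CLI command fails."""


def run_cli(host: str, command: str, timeout: float = 60.0,
            runner: Callable[..., subprocess.CompletedProcess] = subprocess.run) -> str:
    """Run one CLI command on a cluster over SSH and return its stdout.

    Any non-zero exit status (including ssh's 255 for connection errors),
    a timeout, or a missing ssh binary raises CliError.
    """
    try:
        result = runner(["ssh", "-o", "BatchMode=yes", host, command],
                        capture_output=True, text=True, timeout=timeout)
    except (subprocess.TimeoutExpired, OSError) as exc:
        raise CliError(f"{host}: could not run {command!r}: {exc}") from exc
    if result.returncode != 0:
        raise CliError(f"{host}: {command!r} failed with status "
                       f"{result.returncode}: {result.stderr.strip()}")
    return result.stdout


def all_clusters_up(hosts: Sequence[str],
                    runner: Callable[..., subprocess.CompletedProcess] = subprocess.run) -> bool:
    """Return False if any cluster fails to answer a read-only command."""
    for host in hosts:
        try:
            run_cli(host, "lssystem", runner=runner)
        except CliError:
            return False
    return True
```

The `runner` parameter exists so that the error handling can be exercised with a fake `subprocess.run` before the script is pointed at real systems.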

Failure Scenarios

Prod cluster fails and you move production over to Primary_DR

In this scenario, the only two choices are:

- Wait until the Prod environment comes back online. Recovery steps once this has happened are:

    - Restart the RCw relationship in the opposite direction to copy the changes back to Prod.

    - Once the RCw relationship reaches a consistent state, the script can start replicating that data to Secondary_DR.

    - At any point after the RCw relationship is back in a synchronized state, the production workload can be moved back to the Prod cluster.

- Set up a brand-new set of FlashCopy maps and Remote Copy relationships to mirror your data directly from Primary_DR to Secondary_DR. Use either the standard replication technologies (if the Prod environment is unrecoverable) or the scripted technology above (if you expect the Prod environment to come back at some point).

Primary_DR cluster fails and production stays at the Prod cluster

No changes are needed to the environment. It will resume normal operations once Primary_DR becomes available again.

Secondary_DR cluster fails

No changes are needed to the environment. It will resume normal operations once Secondary_DR becomes available again.

Prod and Primary_DR clusters fail and you fail over to Secondary_DR

Bringing up the production at the Secondary_DR site will require this basic procedure:

- ESSENTIAL: Ensure that the script is stopped and cannot restart!

- Stop RCy with the “-access” flag on the CLI. This performs the following steps internally:

    - If the Change Volume is in use, the Change Volume will be copied back onto the Secondary_DR_disk

    - The Secondary_DR_disk will become writable by the host

- For safety, I recommend that you also run “chpartnership -stop” on the Secondary_DR cluster, just to ensure that the script can’t start up again until you are ready.

- Start up your production applications.
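You can capture the CLI part of this procedure in a small helper, so that the commands are reviewed in advance rather than typed under pressure. This is a hypothetical sketch. It only builds the command strings for you to run on the Secondary_DR cluster, and it assumes you pass in your own relationship (or consistency group) name and the partner system name for Prod. It deliberately does not cover the essential first step of making sure the script is stopped.

```python
from __future__ import annotations


def secondary_dr_failover_commands(rcy: str, prod_partner: str,
                                   consistency_group: bool = False) -> list[str]:
    """Build the failover commands to run on the Secondary_DR cluster.

    Precondition (manual, not handled here): the replication script is
    stopped and cannot restart.
    """
    stop = "stoprcconsistgrp" if consistency_group else "stoprcrelationship"
    return [
        f"{stop} -access {rcy}",                # make Secondary_DR_disk writable
        f"chpartnership -stop {prod_partner}",  # keep the script from restarting RCy
    ]


for cmd in secondary_dr_failover_commands("RCy_app1", "PROD"):
    print(cmd)
```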

Copying data back to Prod once it is available

- Depending upon the quality of the link, you might want to change RCy to either Global Mirror or Global Mirror with Change Volumes to avoid impacting production performance

- Start RCy in the reverse direction to copy the data back to Prod

- Wait for the RCy relationship to reach either:

    - a state of consistent_synchronized (MM or GM), or

    - a freeze_time less than 5 minutes old (GMCV)

- Stop the application and note the time

- If GMCV then wait for the freeze_time of RCy to be more recent than the time that you stopped the application

- Create a FlashCopy map from Prod_fct1 to Prod_disk

- Start that FlashCopy with the “-restore” flag

- If you don’t understand what the restore flag does I suggest you read up on it before trying to implement this solution.

- Start the application on the Prod_disk

- When ready, start replication to Primary_DR using the normal process

- Wait for the restore FlashCopy to finish

- Delete the FlashCopy from Prod_fct1 to Prod_disk

- Start up the script again.
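The two wait conditions in this procedure can be expressed as small predicates. This is a minimal sketch. It assumes the relationship state and freeze_time have already been read from `lsrcrelationship`, and that the freeze_time has been converted to a `datetime` (the parsing is not shown). The function and mode names are hypothetical.

```python
from __future__ import annotations

from datetime import datetime, timedelta


def ready_to_stop_application(mode: str, state: str, freeze_time: datetime | None,
                              now: datetime,
                              max_lag: timedelta = timedelta(minutes=5)) -> bool:
    """Copy-back wait: MM/GM must be synchronized; GMCV must have a recent freeze."""
    if mode in ("mm", "gm"):
        return state == "consistent_synchronized"
    if mode == "gmcv":
        return freeze_time is not None and now - freeze_time < max_lag
    raise ValueError(f"unknown replication mode: {mode!r}")


def safe_to_restore(mode: str, state: str, freeze_time: datetime | None,
                    app_stopped_at: datetime) -> bool:
    """Check before starting the -restore FlashCopy onto Prod_disk."""
    if mode == "gmcv":
        # The last frozen image must be newer than the application shutdown.
        return freeze_time is not None and freeze_time > app_stopped_at
    # Assumption: for MM/GM, still insist on consistent_synchronized.
    return state == "consistent_synchronized"
```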

If Prod cannot be recovered, then create new RC relationships with either Primary_DR or a new system and start replicating to the new system.

Spectrum Copy Data Manager

Spectrum Copy Data Manager already has software that allows you to keep FlashCopies in a remote SVC or Storwize system, and that software is basically running exactly the same logic that I described above.

I haven’t had a chance to play with their technology yet – but in theory you should be able to use Spectrum CDM to do most (if not all) of what I described above. Please let me know if you have practical experience doing this.

The solution on older code levels

The difference between the solution on 7.8.1 and the solution on older code levels is the Consistency Protection feature. Without this feature you will need extra work to manage a FlashCopy at the Secondary_DR site.

If you want to try and implement this on older code levels, then the main differences are:

- Your script will also have to control a snapshot FlashCopy at the Secondary_DR site after the RCy background copy has reached 100% complete.

- If you fail over to Secondary_DR and the “Change Volume equivalent” FlashCopy is currently copying, you will have to create and start a FlashCopy restore to copy from the change volume equivalent back onto Secondary_DR_disk before starting up production at Secondary_DR.
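On older code levels, the difference amounts to one extra step in the loop. The following hypothetical sketch shows how a script might choose its steps based on the system's code level. The step names are illustrative. The sketch places the extra snapshot step after RCy reaches 100%, as described above.

```python
from __future__ import annotations


def parse_level(level: str) -> tuple[int, ...]:
    """Turn a code level such as '7.8.1.3' into (7, 8, 1, 3) for comparison."""
    return tuple(int(part) for part in level.split("."))


def cycle_steps(code_level: str) -> list[str]:
    """Loop steps for a code level; below 7.8.1 the script also manages a
    snapshot FlashCopy at Secondary_DR (hypothetical step names)."""
    steps = ["check", "start FCx", "start RCy", "wait RCy 100%"]
    if parse_level(code_level) < (7, 8, 1):
        steps.append("start Secondary_DR snapshot FlashCopy")
    steps += ["wait FCx 100%", "sleep", "stop RCy"]
    return steps
```

Tuple comparison handles levels with different numbers of components, so "7.8.1.3" counts as at least 7.8.1 and "7.7.1.5" does not.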

Acknowledgements

Thanks to everyone who helped get this Blog post ready – and for those of you who have been waiting for it, sorry I took so long.

Specific mentions for Tom Wagner, Bill Scales and Jason Heller
